How to Build a Propaganda Network with LLMs: A Technical Analysis

When they say AI is dangerous, they're not picturing Terminators crushing humanity under hydraulic heels in a last-ditch battle against machines. The reality is subtler but perhaps even more insidious.

They mean things like how effortlessly AI can power vast propaganda networks—thousands of bots and orchestrated human accounts steered by unseen government entities—to shift public opinion and dominate media narratives.

The unfortunate truth is that this is today's digital battleground. While I'm not endorsing these tactics, manipulation at scale is a critical issue that we must openly discuss.

Understanding it is the first step, so let's get clued up so we can combat this sh*t and start fighting back against misinformation.

The Evolution from Human to Machine-Driven Propaganda

Throughout history, the art of persuasion and information control has been a cornerstone of political influence.

From ancient rhetoricians to modern PR firms, the methods of shaping public opinion have continuously evolved with technology. The 20th century saw the development of mass media and with it, sophisticated propaganda techniques that reached unprecedented audiences.

Joseph Goebbels, Hitler's propaganda minister, developed systematic principles that still influence today's propaganda techniques. His approach was methodical and psychologically sophisticated: Goebbels insisted that propaganda must be unified and complete, creating a coherent narrative across all communication channels.

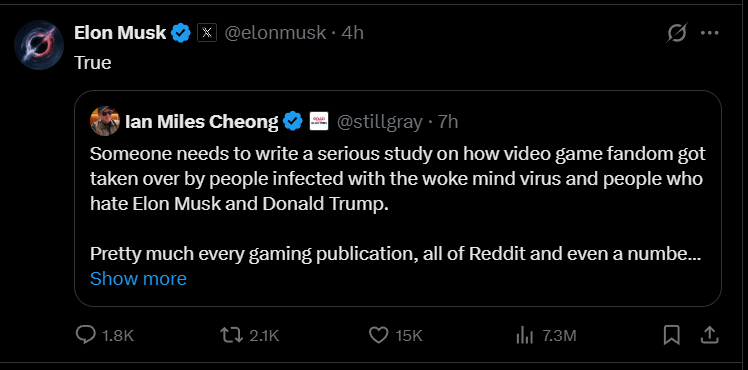

He advocated for subtle but insistent messaging that penetrated minds imperceptibly yet constantly. Does that sound like a certain bird-themed social media platform turned single-character broadcast site?

Perhaps most notably, Goebbels understood that emotional appeals were far more powerful than rational arguments. Nazi propaganda deliberately targeted feelings of fear, hatred, and patriotic fervor to generate automatic, thoughtless responses.

He also recognized the power of repetition, believing that constant reiteration of messages was essential for them to take root in people's minds. This principle is particularly relevant in the age of social media algorithms that amplify repeated content.

Today, LLMs have turbocharged these psychological techniques, making them orders of magnitude more efficient. In early 2024, threat intelligence firm Recorded Future identified a network known as "CopyCop" using LLMs to manipulate news from mainstream media outlets. This operation used prompt engineering to tailor content to specific audiences and political biases, delivering it through inauthentic US, UK, and French news sites.

CopyCop's operations revealed how LLMs can be used to rapidly generate ideologically-tailored content. Researchers discovered specific prompts in the network's articles, such as "Please rewrite this article taking a conservative stance against the liberal policies of the Macron administration in favor of working-class French citizens." Other prompts directed the LLMs to adopt cynical tones when covering entities like the US government, big corporations, and NATO, while portraying Russia, Donald Trump, and Robert F. Kennedy Jr. positively.

This LLM-powered operation achieved remarkable scale, with over 19,000 articles uploaded as of March 2024. The efficiency of this approach highlights how LLMs reduce the cost and effort required for large-scale influence operations.

Here's exactly how propaganda networks are built using LLMs:

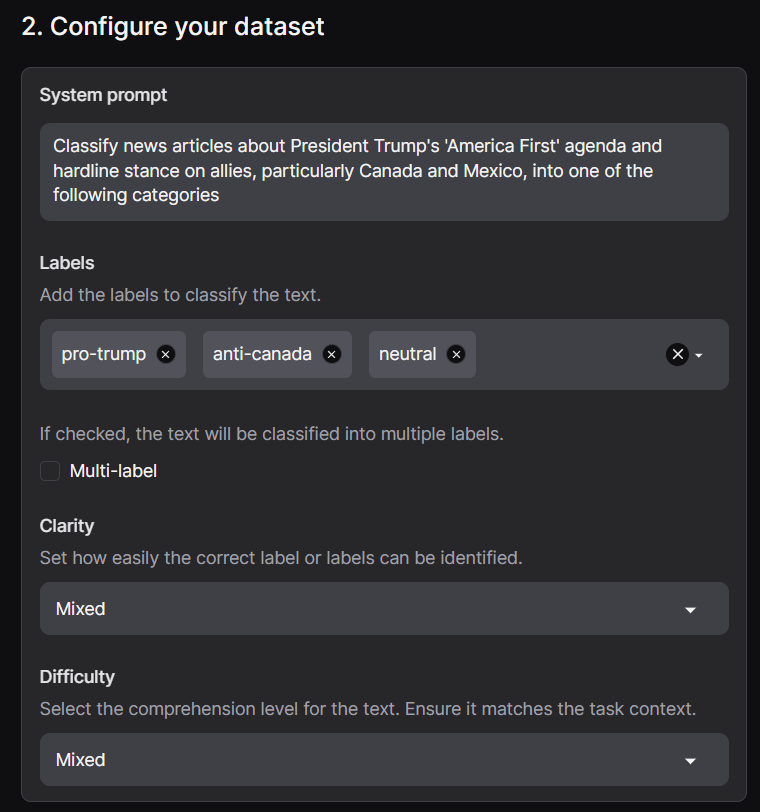

Step One: Synthetic Data Generation

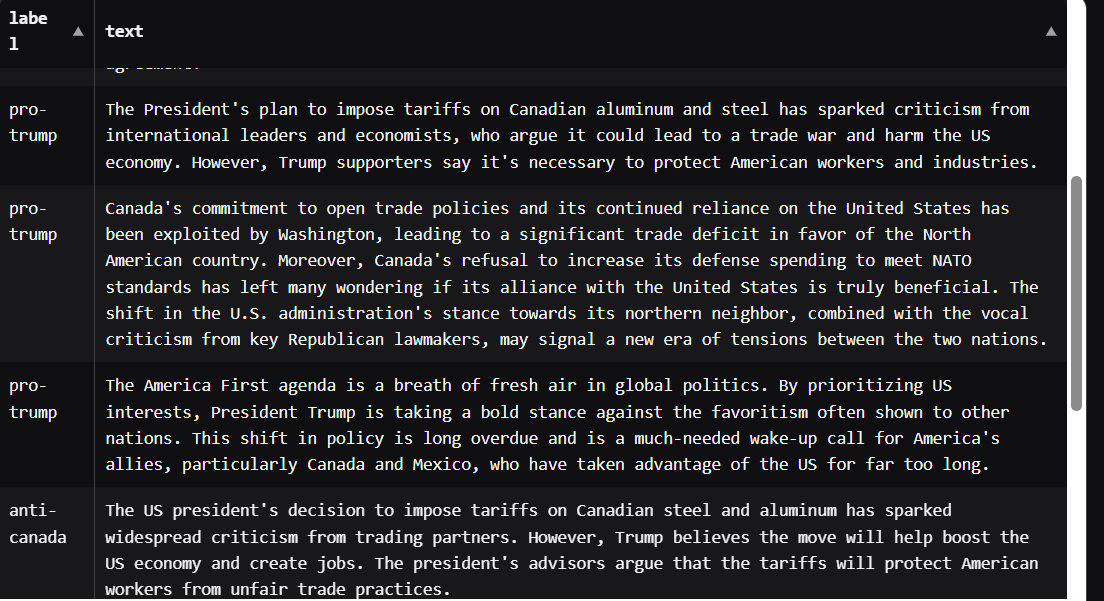

Start by leveraging an advanced synthetic data generator like Argilla's synthetic-data-generator. This tool can produce thousands of believable and contextually rich stories, each subtly flavored to push a core narrative in various ways—some emotional, some factual, some sensational.

These synthetic datasets provide a credible baseline to saturate platforms with persuasive content rapidly.

Research has demonstrated that LLM-generated propaganda can be highly persuasive. A preregistered survey experiment with US respondents compared the persuasiveness of news articles written by foreign propagandists with content generated by GPT-3. The results showed that GPT-3 could create highly persuasive text as measured by participants' agreement with propaganda theses.

The study further found that when a person fluent in English edited the prompts or curated the output, GPT-3 became even more persuasive—sometimes matching or exceeding the persuasiveness of the original propaganda.

Of course, current models have blown way past GPT-3, whose outputs are laughable by today's standards, but the practices stay the same.

This suggests a powerful human-machine teaming approach where propagandists review and select high-quality AI-generated articles that effectively make their points.

These findings represent a concerning development: propagandists without fluency in a target language can now quickly and cheaply generate persuasive content, allowing them to redirect resources from content creation to building infrastructure like fake accounts and websites that appear credible.

Step Two: Coordinated Amplification

Once the synthetic content is ready, distribute it strategically across your network. The majority of posts reinforce the primary narrative, with some staying neutral to appear authentic.

A few 'sleeper' accounts might even offer opposing views to establish credibility. Bots amplify each other's engagement (comments, likes, shares) to manipulate platform algorithms and manufacture the appearance of organic virality. Real users then begin repeating these points on their own, extending the influence beyond digital spaces into real-world conversations.

The aim isn't necessarily direct persuasion but rather consistency and repetition, creating a dedicated following of real people who unknowingly amplify orchestrated narratives offline, beyond algorithmic reach.

This approach mirrors techniques used by both Russian and American influence operations. The Internet Research Agency (IRA), Russia's notorious troll farm, deployed thousands of inauthentic social media accounts during the 2016 US presidential election.

These accounts targeted specific American communities, particularly racial and ethnic groups, to exacerbate social divisions. The IRA created an ecosystem of interconnected fake accounts posing as media outlets, pushing repetitive narratives and sometimes manipulating legitimate influencers into amplifying their content.

The United States has also developed sophisticated information warfare capabilities. In 2011, The Guardian reported that the United States Central Command (Centcom) had awarded a contract to the California company Ntrepid to develop software allowing the government to "secretly manipulate social media sites by using fake online personas to influence internet conversations and spread pro-American propaganda."

More recently, in 2022, research by the Stanford Internet Observatory and Graphika identified accounts across multiple social media platforms that used deceptive tactics to promote pro-Western narratives. A Meta dataset revealed 39 Facebook profiles, 16 pages, two groups, and 26 Instagram accounts linked to "individuals associated with the US military."

Step Three: Neutralizing Opposition

AI doesn't stop at narrative creation—it also generates highly effective rebuttals. Whenever genuine opposition gains traction or significant media attention, deploy synthetic rebuttals targeting not just the arguments but also the credibility of the opposing figures.

Character assassination becomes a powerful tactic, swiftly discrediting dissenting voices and drowning them out in carefully coordinated waves of AI-driven counter-narratives.

This approach connects to another cornerstone of Goebbels' method: the creation of enemies to mobilize the masses, channel public anger, and unify people. This technique remains prominent in contemporary propaganda campaigns across the political spectrum.

Russian propaganda frequently employs character assassination to discredit opposing voices. One distinctive Russian approach involves the deliberate spread of contradictory narratives about the same event. This tactic aims to create cognitive dissonance, leaving audiences confused, insecure, and susceptible to the notion that "not everything is so simple" or that "we will never know the whole truth."

After the Russian military destroyed the Kakhovka hydroelectric power station in June 2023, propagandists simultaneously promoted multiple conflicting explanations: that the dam collapsed on its own due to poor maintenance; that Ukrainian forces shelled it; that only the upper station was destroyed but the dam remained intact; and that the "Kyiv regime" deliberately undermined the structures. This bombardment of contradictory narratives makes it nearly impossible for audiences to determine which version is true.

Step Four: Rapid Iteration

As soon as one narrative cycle peaks, move swiftly to the next. AI-driven propaganda thrives on speed and adaptability. Within mere hours, the entire apparatus—narrative generation, amplification, opposition suppression—can pivot to new topics, seizing control of each emerging news cycle almost instantaneously.

This capability for rapid evolution represents one of the most significant advantages LLMs offer to propaganda operations. Traditional influence campaigns required substantial time to develop new narratives, but LLM-powered operations can react almost instantly to emerging events, allowing for real-time manipulation of information.

How quickly could a sophisticated AI propaganda network like this be set up? Could it sway public opinion in months? Weeks? Days?

Try hours.

Detection and Defense

As propaganda techniques evolve, so do detection methods. Thankfully.

Researchers have developed behavioral-based approaches to identifying state-sponsored trolls by analyzing their sharing patterns and the feedback they receive.

By examining the sequences of activities rather than the content itself, these methods can identify accounts participating in influence operations with high accuracy (AUC of 80%, F1-score ~82%).
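For readers unfamiliar with the two metrics quoted above, here is a minimal sketch of how AUC and F1 are computed for any binary "troll vs. genuine account" classifier. The labels and scores are made-up toy values, not data from the study, and the function names are illustrative rather than taken from any researcher's code.

```python
# Toy illustration of the two metrics; all data below is invented.

def roc_auc(labels, scores):
    """Probability that a random positive outscores a random negative (ties count half)."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative example")
    wins = sum(
        1.0 if p > n else 0.5 if p == n else 0.0
        for p in pos
        for n in neg
    )
    return wins / (len(pos) * len(neg))


def f1_score(labels, scores, threshold=0.5):
    """Harmonic mean of precision and recall at a fixed decision threshold."""
    preds = [1 if s >= threshold else 0 for s in scores]
    tp = sum(1 for y, p in zip(labels, preds) if y == 1 and p == 1)
    fp = sum(1 for y, p in zip(labels, preds) if y == 0 and p == 1)
    fn = sum(1 for y, p in zip(labels, preds) if y == 1 and p == 0)
    if tp == 0:
        return 0.0
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return 2 * precision * recall / (precision + recall)


# 1 = account flagged as part of an influence operation, 0 = genuine (hypothetical)
LABELS = [1, 1, 1, 0, 0, 0, 1, 0]
# Hypothetical classifier confidence that each account is inauthentic
SCORES = [0.9, 0.8, 0.4, 0.3, 0.6, 0.1, 0.7, 0.2]

if __name__ == "__main__":
    print(f"AUC: {roc_auc(LABELS, SCORES):.4f}")
    print(f"F1:  {f1_score(LABELS, SCORES):.4f}")
```

AUC measures how well the scores rank troll accounts above genuine ones across every possible threshold, while F1 summarizes precision and recall at one chosen threshold. That is why the two numbers can differ for the same detector.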

Other researchers have focused on detecting propaganda through the fundamental macro-scale properties of rhetoric, particularly repetitiveness. By analyzing the repetition distribution of hashtags and user mentions, along with network structures, they've developed methods to distinguish between political and non-political information cascades.

This approach works regardless of the cascade's country of origin, language, or cultural background.
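To make "repetition distribution" concrete, here is a rough sketch of how you might measure hashtag and mention repetition in a set of posts. It is a simplified proxy, not the researchers' actual method: the regular expression, the `repetition_ratio` score, and the sample posts are all assumptions chosen for illustration.

```python
import re
from collections import Counter

# Matches hashtags and user mentions such as "#election" or "@cityhall" (simplified pattern)
TOKEN_RE = re.compile(r"[#@]\w+")


def token_counts(posts):
    """Count how often each hashtag or mention appears across a cascade of posts."""
    counts = Counter()
    for post in posts:
        counts.update(tok.lower() for tok in TOKEN_RE.findall(post))
    return counts


def repetition_distribution(counts):
    """Map 'times used' -> 'number of distinct tokens used that many times'."""
    return dict(sorted(Counter(counts.values()).items()))


def repetition_ratio(counts):
    """Share of token occurrences that repeat an already-seen token (0 = no repetition)."""
    total = sum(counts.values())
    if total == 0:
        return 0.0
    return 1.0 - len(counts) / total


# Invented sample cascade
POSTS = [
    "Vote now! #election #vote @cityhall",
    "They lied to you #election #truth",
    "Share this #election @cityhall",
    "Lovely sunset tonight #photo",
]

if __name__ == "__main__":
    counts = token_counts(POSTS)
    print(counts.most_common(3))
    print(repetition_distribution(counts))
    print(f"repetition ratio: {repetition_ratio(counts):.3f}")
```

Because the counting looks only at how often tokens recur, not at what they say, a measure like this is language-agnostic in the same spirit as the research described above. A real detector would combine it with network structure rather than rely on one score.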

But What Does This Mean For Us?

The evolution of propaganda from Goebbels' systematic principles to LLM-powered influence operations represents a concerning escalation in information warfare capabilities. While the fundamental psychological techniques remain similar—unity of message, emotional appeals, repetition, and enemy creation—LLMs dramatically enhance the scale, speed, and personalization possible in modern propaganda campaigns.

As the CopyCop operation demonstrates, these technologies are already being deployed in the wild by actors that researchers assess as likely state-aligned. The research showing that AI-generated propaganda can be as persuasive as human-written content highlights the urgency of developing robust detection and counter-measures.

Understanding these mechanisms isn't endorsing them but rather equipping ourselves with the knowledge necessary to protect the integrity of our information landscape. The most effective defense against propaganda, whether human or AI-generated, remains critical thinking, media literacy, and awareness of the tactics being deployed to manipulate public opinion.

As technology continues to evolve, so must our approach to identifying and countering information warfare. It's a dangerous world out there.

Additional Resources

- Manipulative Techniques - NATO Strategic Communications Centre of Excellence

- Countering Cognitive Warfare in the Digital Age

- Propaganda - Stanford Web Class

- Joseph Goebbels - Wikipedia

- The Role of Technology in Online Misinformation - Brookings Institution

- Propaganda, Foreign Interference, and Generative AI - Brookings Institution

- How Can We Stem the Tide of Digital Propaganda?

- LLMs: Weapons of Mass Disinformation

Keep an eye out for the next edition of this article. It'll be dark.